1. kubectl debug: attach an ephemeral container

2. Sidecar

3. Volume: changing directory permissions with fsGroup

4. ConfigMap and Secret

Kubernetes official documentation: https://kubernetes.io/docs/setup/

Latest high-availability installation guide: https://kubernetes.io/docs/setup/production-environment/tools/kubeadm/high-availability/

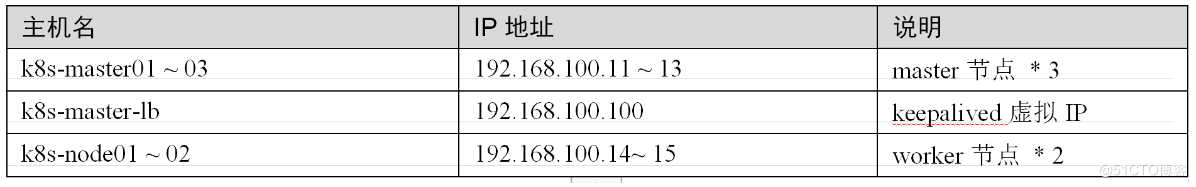

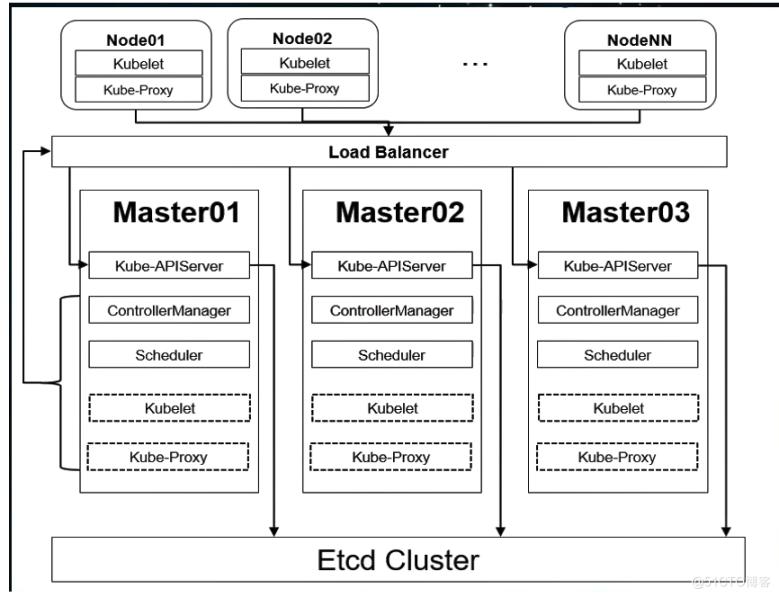

Kubernetes high-availability architecture diagram

Configure hosts on all nodes by editing /etc/hosts as follows:

cat /etc/hosts

----

192.168.100.11 node01.flyfish.cn

192.168.100.12 node02.flyfish.cn

192.168.100.13 node03.flyfish.cn

192.168.100.14 node04.flyfish.cn

192.168.100.15 node05.flyfish.cn

192.168.100.16 node06.flyfish.cn

192.168.100.17 node07.flyfish.cn

192.168.100.18 node08.flyfish.cn

----

curl -o /etc/yum.repos.d/CentOS-Base.repo https://mirrors.aliyun.com/repo/Centos-7.repo

yum install -y yum-utils device-mapper-persistent-data lvm2

yum-config-manager --add-repo https://mirrors.aliyun.com/docker-ce/linux/centos/docker-ce.repo

cat <<EOF > /etc/yum.repos.d/kubernetes.repo

[kubernetes]

name=Kubernetes

baseurl=https://mirrors.aliyun.com/kubernetes/yum/repos/kubernetes-el7-x86_64/

enabled=1

gpgcheck=1

repo_gpgcheck=1

gpgkey=https://mirrors.aliyun.com/kubernetes/yum/doc/yum-key.gpg https://mirrors.aliyun.com/kubernetes/yum/doc/rpm-package-key.gpg

EOF

sed -i -e '/mirrors.cloud.aliyuncs.com/d' -e '/mirrors.aliyuncs.com/d' /etc/yum.repos.d/CentOS-Base.repo

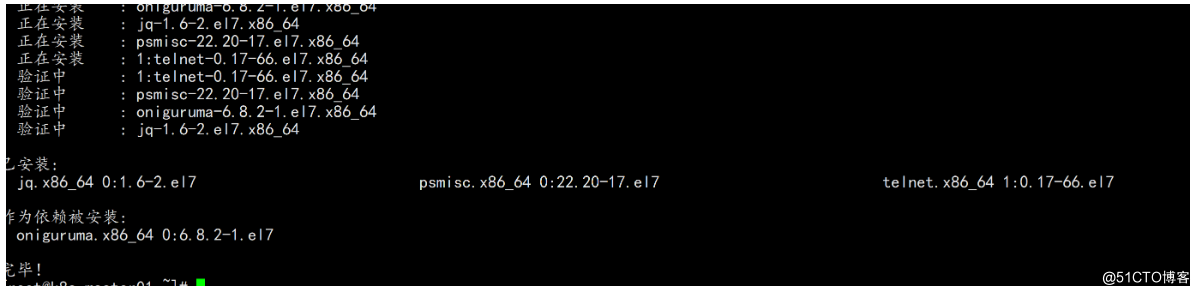

Install the required tools:

yum install wget jq psmisc vim net-tools telnet yum-utils device-mapper-persistent-data lvm2 git -y

Disable the firewall, SELinux, dnsmasq, and swap on all nodes. Configure the servers as follows:

systemctl disable --now firewalld

systemctl disable --now dnsmasq

systemctl disable --now NetworkManager

setenforce 0

sed -i 's#SELINUX=enforcing#SELINUX=disabled#g' /etc/sysconfig/selinux

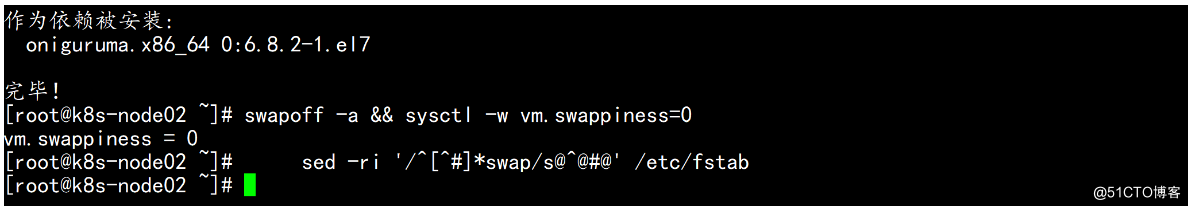

sed -i 's#SELINUX=enforcing#SELINUX=disabled#g' /etc/selinux/config

Disable the swap partition (all nodes):

swapoff -a && sysctl -w vm.swappiness=0

sed -ri '/^[^#]*swap/s@^@#@' /etc/fstab
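Before touching the real /etc/fstab, the effect of the sed expression can be checked against a scratch copy. The following is a minimal sketch, assuming GNU sed; the sample fstab entries are made up for illustration.

```shell
#!/bin/sh
set -eu

# Scratch file standing in for /etc/fstab; the entries are illustrative only.
fstab_demo="$(mktemp)"
cat > "$fstab_demo" <<'EOF'
/dev/mapper/centos-root /    xfs  defaults 0 0
/dev/mapper/centos-swap swap swap defaults 0 0
#/dev/sdb1 swap swap defaults 0 0
EOF

# Same expression as above: prepend '#' to every uncommented line that mentions swap.
# Lines that already start with '#' do not match and are left alone.
sed -ri '/^[^#]*swap/s@^@#@' "$fstab_demo"

cat "$fstab_demo"
```

Because already-commented lines are skipped, running the command a second time should not stack extra '#' characters.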

Install ntpdate:

rpm -ivh http://mirrors.wlnmp.com/centos/wlnmp-release-centos.noarch.rpm

yum install ntpdate -y

Synchronize the time on all nodes. Configure time synchronization as follows:

ln -sf /usr/share/zoneinfo/Asia/Shanghai /etc/localtime

echo 'Asia/Shanghai' >/etc/timezone

ntpdate time2.aliyun.com

Add it to crontab:

*/5 * * * * ntpdate time2.aliyun.com
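If the setup is re-run, the cron line would be added twice unless the append is guarded. Below is a minimal sketch of an idempotent append, demonstrated on a scratch file rather than the live crontab; `add_cron_line` is a hypothetical helper name, not part of any tool.

```shell
#!/bin/sh
set -eu

# add_cron_line FILE LINE: append LINE to FILE unless an identical line already exists.
add_cron_line() {
    file="$1"
    line="$2"
    grep -qxF -- "$line" "$file" 2>/dev/null || printf '%s\n' "$line" >> "$file"
}

cron_demo="$(mktemp)"
entry='*/5 * * * * ntpdate time2.aliyun.com'

add_cron_line "$cron_demo" "$entry"
add_cron_line "$cron_demo" "$entry"   # second call finds the line and does nothing

cat "$cron_demo"
```

On a real node the same helper could be applied to a file produced by `crontab -l`, with the result loaded back using `crontab FILE`.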

Configure limits on all nodes:

ulimit -SHn 65535

vim /etc/security/limits.conf

# Append the following at the end of the file

* soft nofile 65536

* hard nofile 131072

* soft nproc 655350

* hard nproc 655350

* soft memlock unlimited

* hard memlock unlimited

Set up passwordless SSH login from Master01 to the other nodes:

ssh-keygen -t rsa

for i in k8s-master01.flyfish.cn k8s-master02.flyfish.cn k8s-master03.flyfish.cn k8s-node01.flyfish.cn k8s-node02.flyfish.cn;do ssh-copy-id -i .ssh/id_rsa.pub $i;done

Update the system on all nodes and reboot:

yum update -y && reboot

Download the installation source files:

cd /root/ ; git clone https://github.com/dotbalo/k8s-ha-install.git

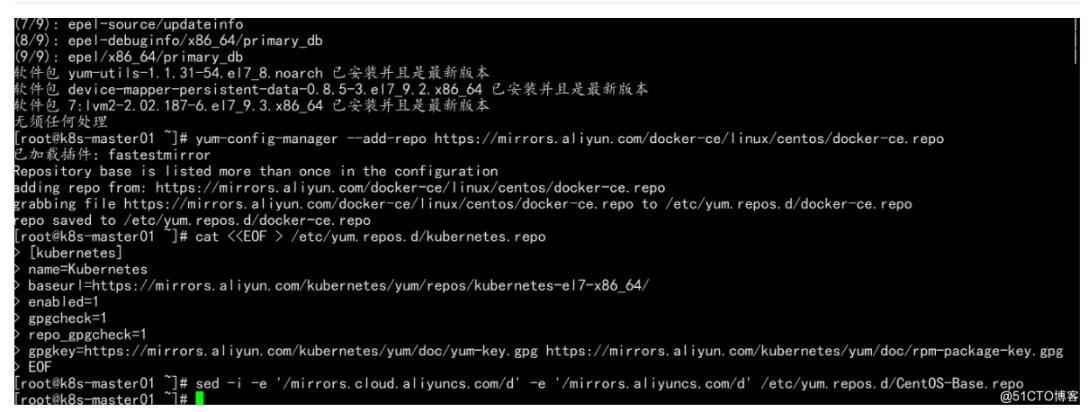

Configure the yum repositories on CentOS 7 as follows:

curl -o /etc/yum.repos.d/CentOS-Base.repo https://mirrors.aliyun.com/repo/Centos-7.repo

yum install -y yum-utils device-mapper-persistent-data lvm2

yum-config-manager --add-repo https://mirrors.aliyun.com/docker-ce/linux/centos/docker-ce.repo

cat <<EOF > /etc/yum.repos.d/kubernetes.repo

[kubernetes]

name=Kubernetes

baseurl=https://mirrors.aliyun.com/kubernetes/yum/repos/kubernetes-el7-x86_64/

enabled=1

gpgcheck=1

repo_gpgcheck=1

gpgkey=https://mirrors.aliyun.com/kubernetes/yum/doc/yum-key.gpg https://mirrors.aliyun.com/kubernetes/yum/doc/rpm-package-key.gpg

EOF

sed -i -e '/mirrors.cloud.aliyuncs.com/d' -e '/mirrors.aliyuncs.com/d' /etc/yum.repos.d/CentOS-Base.repo

Configure the repositories on CentOS 8 as follows:

curl -o /etc/yum.repos.d/CentOS-Base.repo http://mirrors.aliyun.com/repo/Centos-8.repo

yum install -y yum-utils device-mapper-persistent-data lvm2

yum-config-manager --add-repo https://mirrors.aliyun.com/docker-ce/linux/centos/docker-ce.repo

cat <<EOF > /etc/yum.repos.d/kubernetes.repo

[kubernetes]

name=Kubernetes

baseurl=https://mirrors.aliyun.com/kubernetes/yum/repos/kubernetes-el7-x86_64/

enabled=1

gpgcheck=1

repo_gpgcheck=1

gpgkey=https://mirrors.aliyun.com/kubernetes/yum/doc/yum-key.gpg https://mirrors.aliyun.com/kubernetes/yum/doc/rpm-package-key.gpg

EOF

sed -i -e '/mirrors.cloud.aliyuncs.com/d' -e '/mirrors.aliyuncs.com/d' /etc/yum.repos.d/CentOS-Base.repo

Update the system on all nodes and reboot. This update excludes the kernel, which is upgraded separately in the next section:

yum install wget jq psmisc vim net-tools telnet yum-utils device-mapper-persistent-data lvm2 -y

yum update -y --exclude=kernel* && reboot # required on CentOS 7, not on CentOS 8

1.1.2 Kernel configuration

CentOS 7 needs its kernel upgraded to 4.18 or later.

https://www.kernel.org/ and https://elrepo.org/linux/kernel/el7/x86_64/

On CentOS 7, dnf may be unable to install the kernel:

dnf --disablerepo=\* --enablerepo=elrepo -y install kernel-ml kernel-ml-devel

grubby --default-kernel

Install the latest kernel as follows:

rpm --import https://www.elrepo.org/RPM-GPG-KEY-elrepo.org

rpm -Uvh http://www.elrepo.org/elrepo-release-7.0-2.el7.elrepo.noarch.rpm

List the latest available kernels:

[root@k8s-node01 ~]# yum --disablerepo="*" --enablerepo="elrepo-kernel" list available

Loaded plugins: fastestmirror

Loading mirror speeds from cached hostfile

* elrepo-kernel: mirrors.neusoft.edu.cn

elrepo-kernel | 2.9 kB 00:00:00

elrepo-kernel/primary_db | 1.9 MB 00:00:00

Available Packages

elrepo-release.noarch 7.0-5.el7.elrepo elrepo-kernel

kernel-lt.x86_64 4.4.229-1.el7.elrepo elrepo-kernel

kernel-lt-devel.x86_64 4.4.229-1.el7.elrepo elrepo-kernel

kernel-lt-doc.noarch 4.4.229-1.el7.elrepo elrepo-kernel

kernel-lt-headers.x86_64 4.4.229-1.el7.elrepo elrepo-kernel

kernel-lt-tools.x86_64 4.4.229-1.el7.elrepo elrepo-kernel

kernel-lt-tools-libs.x86_64 4.4.229-1.el7.elrepo elrepo-kernel

kernel-lt-tools-libs-devel.x86_64 4.4.229-1.el7.elrepo elrepo-kernel

kernel-ml.x86_64 5.7.7-1.el7.elrepo elrepo-kernel

kernel-ml-devel.x86_64 5.7.7-1.el7.elrepo elrepo-kernel

kernel-ml-doc.noarch 5.7.7-1.el7.elrepo elrepo-kernel

kernel-ml-headers.x86_64 5.7.7-1.el7.elrepo elrepo-kernel

kernel-ml-tools.x86_64 5.7.7-1.el7.elrepo elrepo-kernel

kernel-ml-tools-libs.x86_64 5.7.7-1.el7.elrepo elrepo-kernel

kernel-ml-tools-libs-devel.x86_64 5.7.7-1.el7.elrepo elrepo-kernel

perf.x86_64 5.7.7-1.el7.elrepo elrepo-kernel

python-perf.x86_64 5.7.7-1.el7.elrepo elrepo-kernel

Install the latest version:

yum --enablerepo=elrepo-kernel install kernel-ml kernel-ml-devel -y

Reboot after the installation completes.

Change the default kernel boot order:

grub2-set-default 0 && grub2-mkconfig -o /etc/grub2.cfg && grubby --args="user_namespace.enable=1" --update-kernel="$(grubby --default-kernel)" && reboot

Check the kernel after booting:

[appadmin@k8s-node01 ~]$ uname -a

Linux k8s-node01 5.7.7-1.el7.elrepo.x86_64 #1 SMP Wed Jul 1 11:53:16 EDT 2020 x86_64 x86_64 x86_64 GNU/Linux

Upgrade CentOS 8 as needed:

Upgrade with dnf, or follow the same steps as above (in that case, use the elrepo-release-8.1 package):

rpm --import https://www.elrepo.org/RPM-GPG-KEY-elrepo.org

dnf install https://www.elrepo.org/elrepo-release-8.1-1.el8.elrepo.noarch.rpm

dnf --disablerepo=\* --enablerepo=elrepo -y install kernel-ml kernel-ml-devel

grubby --default-kernel && reboot

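Install the dependency packages:

Install ipvsadm on all nodes:

yum install ipvsadm ipset sysstat conntrack libseccomp -y

Configure the ipvs modules on all nodes. From kernel 4.19 on, nf_conntrack_ipv4 has been renamed to nf_conntrack. This example assumes a 4.18 kernel, so nf_conntrack_ipv4 is used; on 4.19 or later, such as the 5.7.7 kernel-ml shown above, load nf_conntrack instead: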

modprobe -- ip_vs

modprobe -- ip_vs_rr

modprobe -- ip_vs_wrr

modprobe -- ip_vs_sh

modprobe -- nf_conntrack_ipv4

cat /etc/modules-load.d/ipvs.conf

ip_vs

ip_vs_rr

ip_vs_wrr

ip_vs_sh

nf_conntrack_ipv4

ip_tables

ip_set

xt_set

ipt_set

ipt_rpfilter

ipt_REJECT

ipip
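Since the conntrack module name depends on the kernel version, the choice can be scripted instead of edited by hand. This is a minimal sketch, assuming GNU `sort -V` is available; `kernel_at_least` and `conntrack_module_for` are hypothetical helper names.

```shell
#!/bin/sh
set -eu

# kernel_at_least RELEASE MIN: succeed if the numeric part of RELEASE is >= MIN.
kernel_at_least() {
    have="${1%%-*}"          # "5.7.7-1.el7.elrepo.x86_64" -> "5.7.7"
    want="$2"
    lowest="$(printf '%s\n%s\n' "$want" "$have" | sort -V | head -n 1)"
    [ "$lowest" = "$want" ]
}

# conntrack_module_for RELEASE: print the conntrack module name for that kernel.
conntrack_module_for() {
    if kernel_at_least "$1" 4.19; then
        echo nf_conntrack
    else
        echo nf_conntrack_ipv4
    fi
}

# Report the module name for the running kernel.
conntrack_module_for "$(uname -r)"
```

The result could then be passed to modprobe, for example `modprobe -- "$(conntrack_module_for "$(uname -r)")"`, and written into /etc/modules-load.d/ipvs.conf in place of the hard-coded name.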

Then run systemctl enable --now systemd-modules-load.service to load the modules.

Enable the kernel parameters that a Kubernetes cluster requires; apply this configuration on all nodes:

cat <<EOF > /etc/sysctl.d/k8s.conf

net.ipv4.ip_forward = 1

net.bridge.bridge-nf-call-iptables = 1

fs.may_detach_mounts = 1

vm.overcommit_memory=1

vm.panic_on_oom=0

fs.inotify.max_user_watches=89100

fs.file-max=52706963

fs.nr_open=52706963

net.netfilter.nf_conntrack_max=2310720

net.ipv4.tcp_keepalive_time = 600

net.ipv4.tcp_keepalive_probes = 3

net.ipv4.tcp_keepalive_intvl =15

net.ipv4.tcp_max_tw_buckets = 36000

net.ipv4.tcp_tw_reuse = 1

net.ipv4.tcp_max_orphans = 327680

net.ipv4.tcp_orphan_retries = 3

net.ipv4.tcp_syncookies = 1

net.ipv4.tcp_max_syn_backlog = 16384

net.ipv4.ip_conntrack_max = 65536

net.ipv4.tcp_max_syn_backlog = 16384

net.ipv4.tcp_timestamps = 0

net.core.somaxconn = 16384

EOF

sysctl --system
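The parameter list above assigns net.ipv4.tcp_max_syn_backlog twice. That is harmless here because both values match, but in a long file a later duplicate can silently override an earlier setting. Below is a minimal sketch of a duplicate-key check, run on a scratch file with a few lines taken from the list; `dup_sysctl_keys` is a hypothetical helper name.

```shell
#!/bin/sh
set -eu

# dup_sysctl_keys FILE: print each key that is assigned more than once in FILE.
dup_sysctl_keys() {
    awk -F= '
        /^[ \t]*#/ || /^[ \t]*$/ { next }     # skip comments and blank lines
        {
            key = $1
            gsub(/[ \t]/, "", key)             # strip spaces around the key
            if (seen[key]++ == 1) print key    # report on the second occurrence only
        }
    ' "$1"
}

conf_demo="$(mktemp)"
cat > "$conf_demo" <<'EOF'
net.ipv4.tcp_syncookies = 1
net.ipv4.tcp_max_syn_backlog = 16384
net.ipv4.ip_conntrack_max = 65536
net.ipv4.tcp_max_syn_backlog = 16384
net.core.somaxconn = 16384
EOF

dup_sysctl_keys "$conf_demo"
```

Pointing the function at /etc/sysctl.d/k8s.conf would perform the same check on the real file.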

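1.1.3 Basic component installation

This section installs the components used in the cluster, such as Docker CE and the Kubernetes components.

List the available docker-ce versions: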

yum list docker-ce.x86_64 --showduplicates | sort -r

[root@k8s-master01 k8s-ha-install]# wget https://download.docker.com/linux/centos/7/x86_64/edge/Packages/containerd.io-1.2.13-3.2.el7.x86_64.rpm

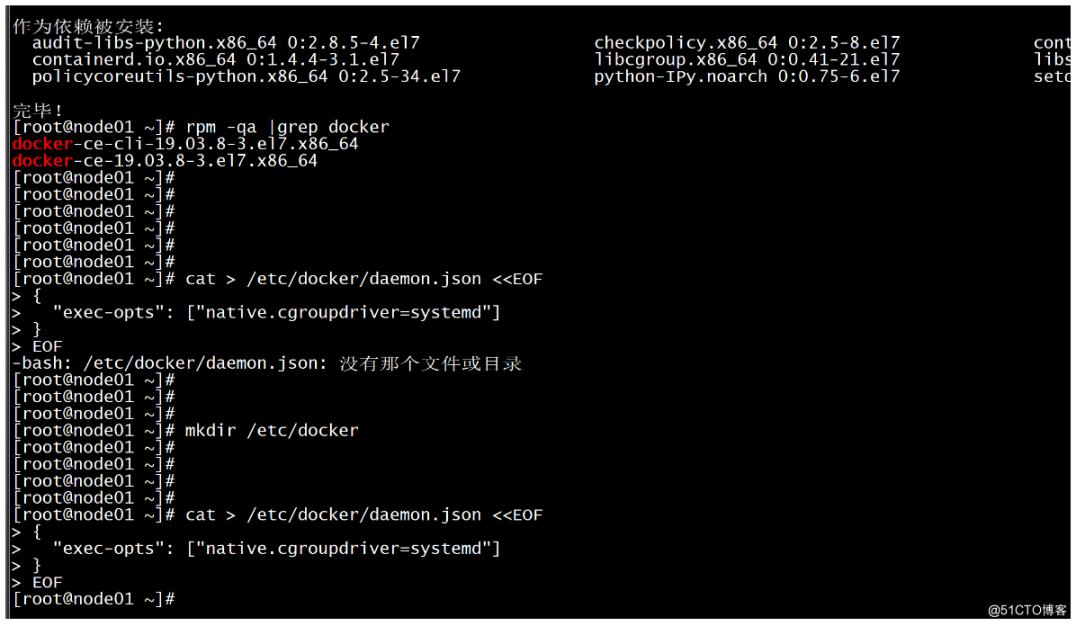

Install docker-ce 19.03:

yum install -y docker-ce-cli-19.03.8-3.el7.x86_64 docker-ce-19.03.8-3.el7.x86_64

Note:

Recent kubelet versions recommend the systemd cgroup driver, so Docker's CgroupDriver can be changed to systemd:

cat > /etc/docker/daemon.json <<EOF

{

"exec-opts": ["native.cgroupdriver=systemd"]

}

EOF

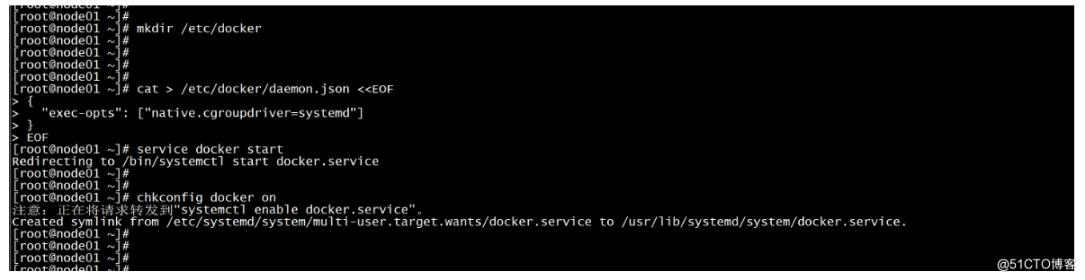

Start Docker:

service docker start

chkconfig docker on


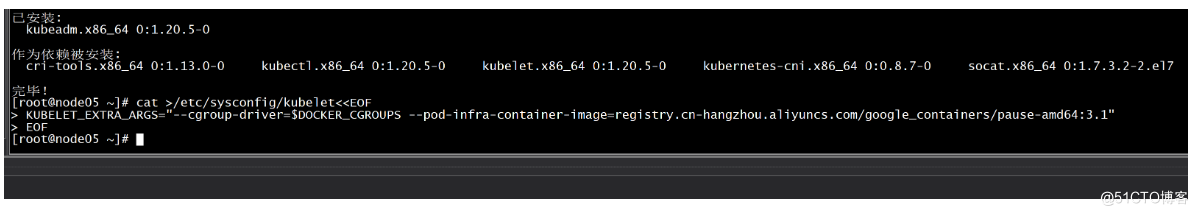

Install the Kubernetes components:

yum list kubeadm.x86_64 --showduplicates | sort -r

Install the latest kubeadm on all nodes:

yum install kubeadm -y

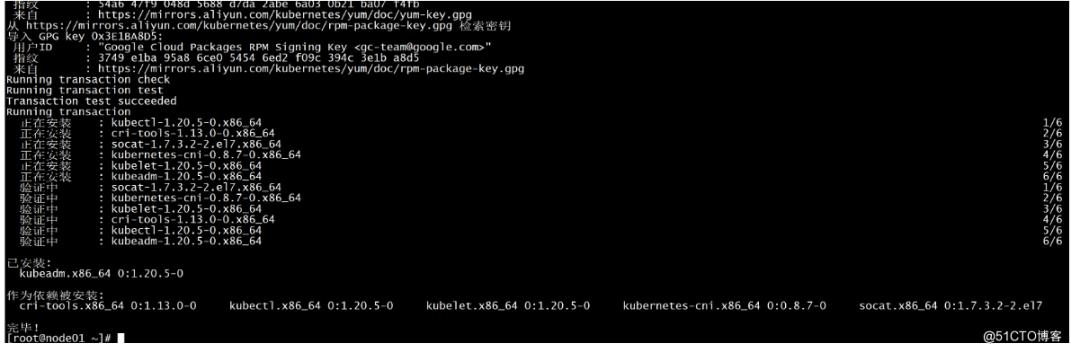

Install a specific version of the Kubernetes components on all nodes:

yum install -y kubeadm-1.20.5-0.x86_64 kubectl-1.20.5-0.x86_64 kubelet-1.20.5-0.x86_64

Enable Docker at boot on all nodes:

systemctl daemon-reload && systemctl enable --now docker

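The default pause image is pulled from gcr.io, which may be unreachable from mainland China, so configure the kubelet to use the pause image from Alibaba Cloud: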

DOCKER_CGROUPS=$(docker info --format '{{.CgroupDriver}}')

cat >/etc/sysconfig/kubelet<<EOF

KUBELET_EXTRA_ARGS="--cgroup-driver=$DOCKER_CGROUPS --pod-infra-container-image=registry.cn-hangzhou.aliyuncs.com/google_containers/pause-amd64:3.1"

EOF

Enable the kubelet at boot:

systemctl daemon-reload

systemctl enable --now kubelet

1.1.4 High-availability component installation

Install HAProxy and Keepalived on all master nodes with yum:

yum install keepalived haproxy -y

Configure HAProxy on all master nodes (see the HAProxy documentation for details; the configuration is identical on every master):

[root@k8s-master01 etc]# mkdir /etc/haproxy

[root@k8s-master01 etc]# vim /etc/haproxy/haproxy.cfg

global

maxconn 2000

ulimit-n 16384

log 127.0.0.1 local0 err

stats timeout 30s

defaults

log global

mode http

option httplog

timeout connect 5000

timeout client 50000

timeout server 50000

timeout http-request 15s

timeout http-keep-alive 15s

frontend monitor-in

bind *:33305

mode http

option httplog

monitor-uri /monitor

listen stats

bind *:8006

mode http

stats enable

stats hide-version

stats uri /stats

stats refresh 30s

stats realm Haproxy\ Statistics

stats auth admin:admin

frontend k8s-master

bind 0.0.0.0:16443

bind 127.0.0.1:16443

mode tcp

option tcplog

tcp-request inspect-delay 5s

default_backend k8s-master

backend k8s-master

mode tcp

option tcplog

option tcp-check

balance roundrobin

default-server inter 10s downinter 5s rise 2 fall 2 slowstart 60s maxconn 250 maxqueue 256 weight 100

server node01.flyfish.cn 192.168.100.11:6443 check

server node02.flyfish.cn 192.168.100.12:6443 check

server node03.flyfish.cn 192.168.100.13:6443 check

----
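The three `server` lines in the backend differ only in host name and address, so they can be generated from a list when masters are added or replaced. The following is a minimal sketch; `backend_servers` is a hypothetical helper, and port 6443 is taken from the configuration above.

```shell
#!/bin/sh
set -eu

# backend_servers NAME=IP...: print one HAProxy "server" line per control-plane node.
backend_servers() {
    for entry in "$@"; do
        name="${entry%%=*}"   # text before the first '='
        ip="${entry#*=}"      # text after the first '='
        printf 'server %s %s:6443 check\n' "$name" "$ip"
    done
}

backend_servers \
    node01.flyfish.cn=192.168.100.11 \
    node02.flyfish.cn=192.168.100.12 \
    node03.flyfish.cn=192.168.100.13
```

After editing the real file, `haproxy -c -f /etc/haproxy/haproxy.cfg` checks the configuration syntax without starting the service.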

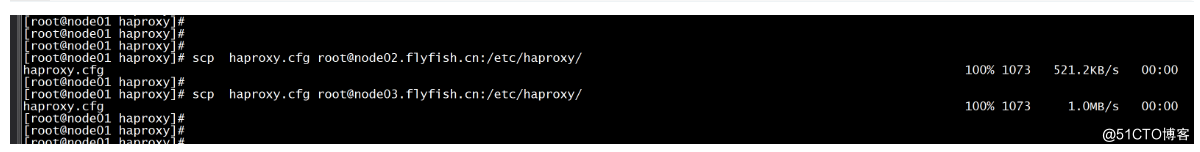

The configuration is identical on all three machines:

scp haproxy.cfg root@node02.flyfish.cn:/etc/haproxy/

scp haproxy.cfg root@node03.flyfish.cn:/etc/haproxy/

Keepalived configuration on Master01:

[root@k8s-master01 etc]# mkdir /etc/keepalived

[root@k8s-master01 ~]# vim /etc/keepalived/keepalived.conf

! Configuration File for keepalived

global_defs {

router_id LVS_DEVEL

}

vrrp_script chk_apiserver {

script "/etc/keepalived/check_apiserver.sh"

interval 2

weight -5

fall 3

rise 2

}

vrrp_instance VI_1 {

state MASTER

interface ens33

mcast_src_ip 192.168.100.11

virtual_router_id 51

priority 100

advert_int 2

authentication {

auth_type PASS

auth_pass K8SHA_KA_AUTH

}

virtual_ipaddress {

192.168.100.200

}

track_script {

chk_apiserver

}

}

Keepalived configuration on Master02:

! Configuration File for keepalived

global_defs {

router_id LVS_DEVEL

}

vrrp_script chk_apiserver {

script "/etc/keepalived/check_apiserver.sh"

interval 2

weight -5

fall 3

rise 2

}

vrrp_instance VI_1 {

state BACKUP

interface ens33

mcast_src_ip 192.168.100.12

virtual_router_id 51

priority 101

advert_int 2

authentication {

auth_type PASS

auth_pass K8SHA_KA_AUTH

}

virtual_ipaddress {

192.168.100.200

}

track_script {

chk_apiserver

}

}

Keepalived configuration on Master03:

! Configuration File for keepalived

global_defs {

router_id LVS_DEVEL

}

vrrp_script chk_apiserver {

script "/etc/keepalived/check_apiserver.sh"

interval 2

weight -5

fall 3

rise 2

}

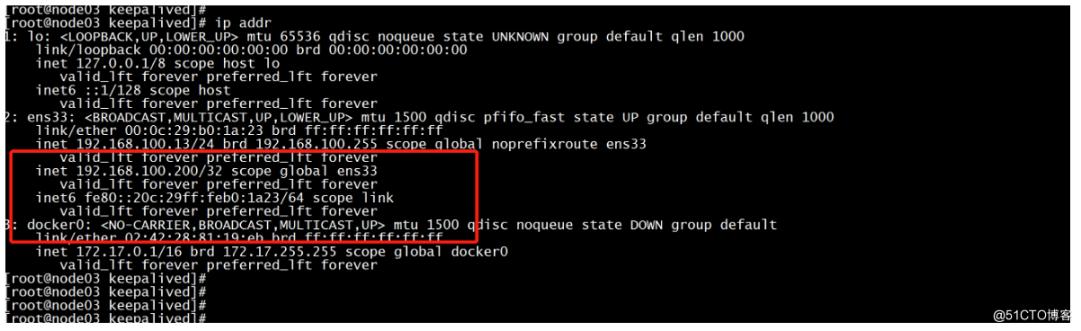

vrrp_instance VI_1 {

state BACKUP

interface ens33

mcast_src_ip 192.168.100.13

virtual_router_id 51

priority 102

advert_int 2

authentication {

auth_type PASS

auth_pass K8SHA_KA_AUTH

}

virtual_ipaddress {

192.168.100.200

}

track_script {

chk_apiserver

}

}
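The three Keepalived files differ only in `state`, `mcast_src_ip`, and `priority`, so they can be rendered from a single template. This is a minimal sketch under that assumption; `render_keepalived` is a hypothetical helper, and every other value is copied from the configurations above.

```shell
#!/bin/sh
set -eu

# render_keepalived STATE SRC_IP PRIORITY: print a keepalived.conf for one master.
render_keepalived() {
    cat <<EOF
! Configuration File for keepalived
global_defs {
    router_id LVS_DEVEL
}
vrrp_script chk_apiserver {
    script "/etc/keepalived/check_apiserver.sh"
    interval 2
    weight -5
    fall 3
    rise 2
}
vrrp_instance VI_1 {
    state $1
    interface ens33
    mcast_src_ip $2
    virtual_router_id 51
    priority $3
    advert_int 2
    authentication {
        auth_type PASS
        auth_pass K8SHA_KA_AUTH
    }
    virtual_ipaddress {
        192.168.100.200
    }
    track_script {
        chk_apiserver
    }
}
EOF
}

# Render the Master01 variant to standard output.
render_keepalived MASTER 192.168.100.11 100
```

Redirecting the output to /etc/keepalived/keepalived.conf on each master, with that node's own three arguments, would reproduce the files shown above.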

Note: the health check above should stay disabled until the cluster has been set up, and be enabled afterwards:

track_script {

chk_apiserver

}

Create the Keepalived health-check script:

[root@k8s-master01 keepalived]# cat /etc/keepalived/check_apiserver.sh

#!/bin/bash

err=0

for k in $(seq 1 5)

do

check_code=$(pgrep kube-apiserver)

if [[ $check_code == "" ]]; then

err=$(expr $err + 1)

sleep 5

continue

else

err=0

break

fi

done

if [[ $err != "0" ]]; then

echo "systemctl stop keepalived"

/usr/bin/systemctl stop keepalived

exit 1

else

exit 0

fi

Start haproxy and keepalived (on all masters):

[root@k8s-master01 keepalived]# systemctl enable --now haproxy

[root@k8s-master01 keepalived]# systemctl enable --now keepalived

Cluster initialization:

https://kubernetes.io/docs/setup/production-environment/tools/kubeadm/high-availability/

The kubeadm-config.yaml configuration file for each master node is as follows:

Master01:

daocloud.io/daocloud

-----

apiVersion: kubeadm.k8s.io/v1beta2

bootstrapTokens:

- groups:

  - system:bootstrappers:kubeadm:default-node-token

  token: 7t2weq.bjbawausm0jaxury

  ttl: 24h0m0s

  usages:

  - signing

  - authentication

kind: InitConfiguration

localAPIEndpoint:

  advertiseAddress: 192.168.100.11

  bindPort: 6443

nodeRegistration:

  criSocket: /var/run/dockershim.sock

  name: node01.flyfish.cn

  taints:

  - effect: NoSchedule

    key: node-role.kubernetes.io/master

---

apiServer:

  certSANs:

  - 192.168.100.200

  timeoutForControlPlane: 4m0s

apiVersion: kubeadm.k8s.io/v1beta2

certificatesDir: /etc/kubernetes/pki

clusterName: kubernetes

controlPlaneEndpoint: 192.168.100.200:16443

controllerManager: {}

dns:

  type: CoreDNS

etcd:

  local:

    dataDir: /var/lib/etcd

imageRepository: registry.cn-hangzhou.aliyuncs.com/google_containers

kind: ClusterConfiguration

kubernetesVersion: v1.20.5

networking:

  dnsDomain: cluster.local

  podSubnet: 172.168.100.0/16

  serviceSubnet: 10.96.0.0/12

scheduler: {}

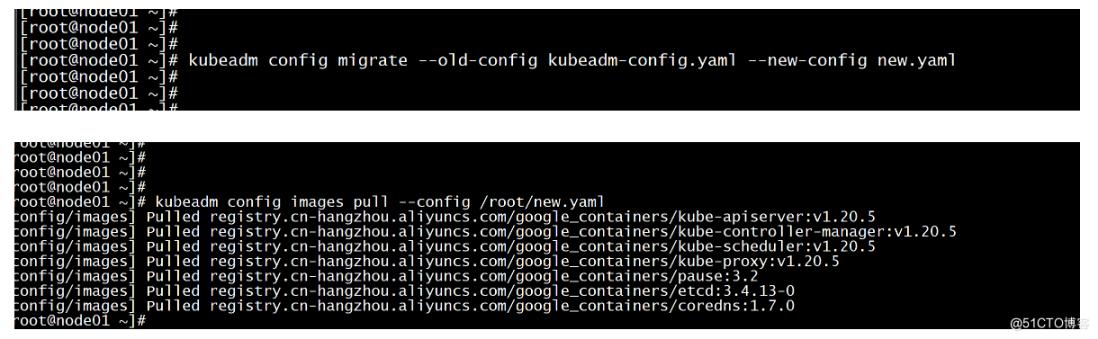

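----

Update the kubeadm configuration file:

kubeadm config migrate --old-config kubeadm-config.yaml --new-config new.yaml

Pull the images in advance on all master nodes to shorten initialization time:

kubeadm config images pull --config /root/new.yaml

Enable the kubelet at boot on all nodes:

systemctl enable --now kubelet

Initialize the Master01 node. Initialization generates the certificates and configuration files under /etc/kubernetes; afterwards the other master nodes join Master01: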

kubeadm init --config /root/kubeadm-config.yaml --upload-certs

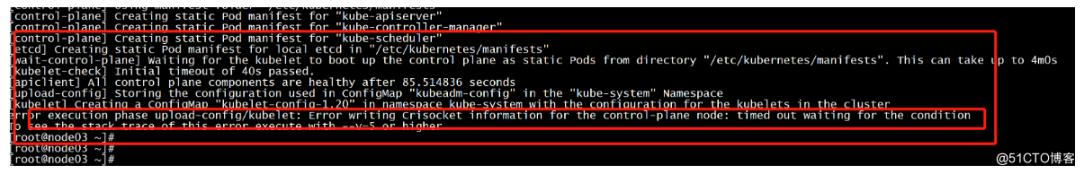

To initialize without a configuration file:

kubeadm init --control-plane-endpoint "LOAD_BALANCER_DNS:LOAD_BALANCER_PORT" --upload-certs

Initialization failed with this error:

error execution phase upload-config/kubelet: Error writing Crisocket information for the control-plane node: timed out waiting for the condition

To see the stack trace of this error execute with --v=5 or higher


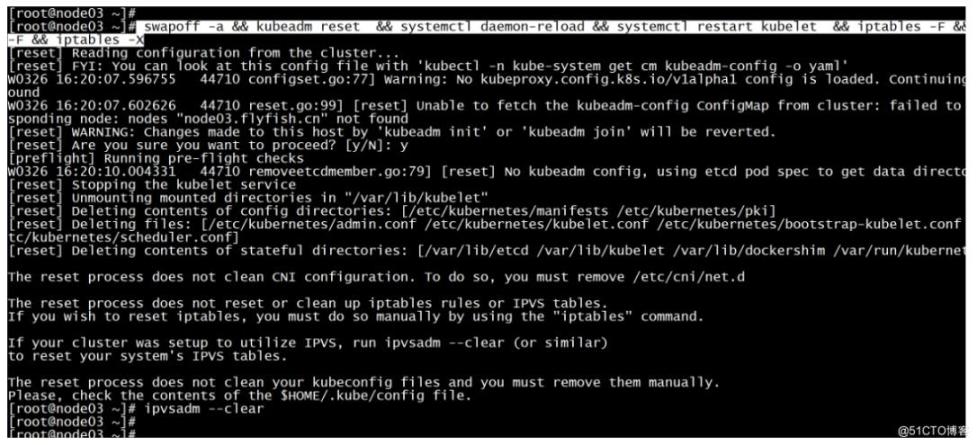

Solution:

Stop the kubelet on all hosts:

service kubelet stop

Then run:

swapoff -a && kubeadm reset && systemctl daemon-reload && systemctl restart kubelet && iptables -F && iptables -t nat -F && iptables -t mangle -F && iptables -X

ipvsadm --clear


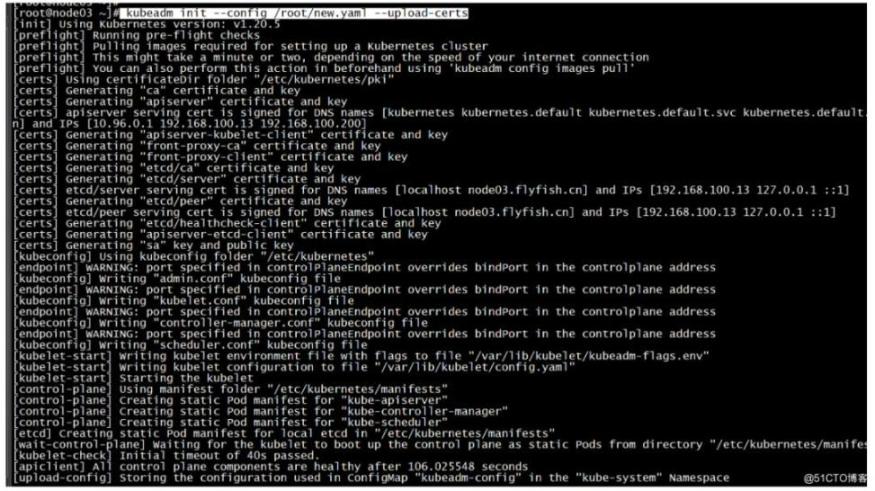

Initialize again:

kubeadm init --config /root/new.yaml --upload-certs

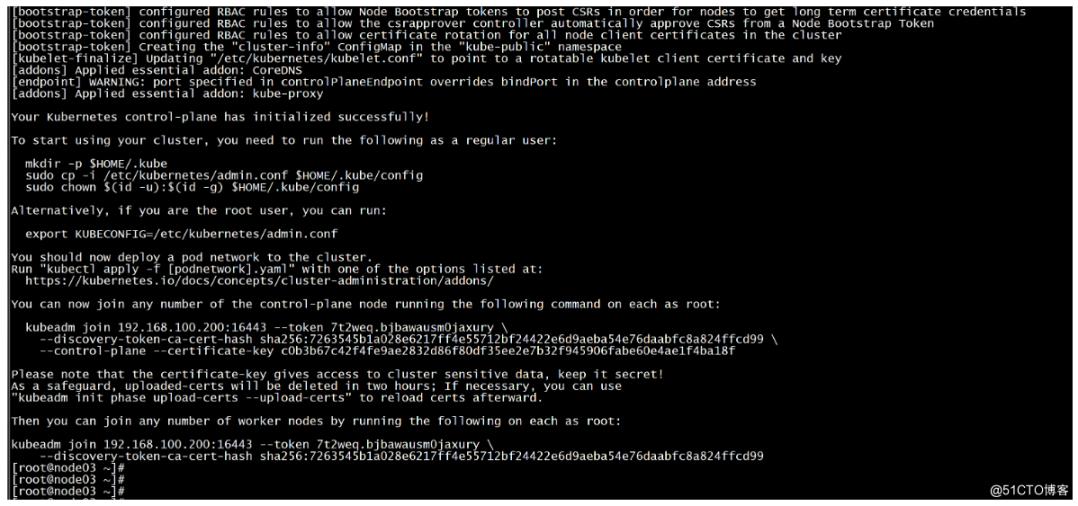

Your Kubernetes control-plane has initialized successfully!

To start using your cluster, you need to run the following as a regular user:

mkdir -p $HOME/.kube

sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config

sudo chown $(id -u):$(id -g) $HOME/.kube/config

Alternatively, if you are the root user, you can run:

export KUBECONFIG=/etc/kubernetes/admin.conf

You should now deploy a pod network to the cluster.

Run "kubectl apply -f [podnetwork].yaml" with one of the options listed at:

https://kubernetes.io/docs/concepts/cluster-administration/addons/

You can now join any number of the control-plane node running the following command on each as root:

kubeadm join 192.168.100.200:16443 --token 7t2weq.bjbawausm0jaxury \

--discovery-token-ca-cert-hash sha256:7263545b1a028e6217ff4e55712bf24422e6d9aeba54e76daabfc8a824ffcd99 \

--control-plane --certificate-key c0b3b67c42f4fe9ae2832d86f80df35ee2e7b32f945906fabe60e4ae1f4ba18f

Please note that the certificate-key gives access to cluster sensitive data, keep it secret!

As a safeguard, uploaded-certs will be deleted in two hours; If necessary, you can use

"kubeadm init phase upload-certs --upload-certs" to reload certs afterward.

Then you can join any number of worker nodes by running the following on each as root:

kubeadm join 192.168.100.200:16443 --token 7t2weq.bjbawausm0jaxury \

--discovery-token-ca-cert-hash sha256:7263545b1a028e6217ff4e55712bf24422e6d9aeba54e76daabfc8a824ffcd99

mkdir -p $HOME/.kube

sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config

sudo chown $(id -u):$(id -g) $HOME/.kube/config

Configure the environment variable on all master nodes for access to the Kubernetes cluster:

cat <<EOF >> /root/.bashrc

export KUBECONFIG=/etc/kubernetes/admin.conf

EOF

source /root/.bashrc

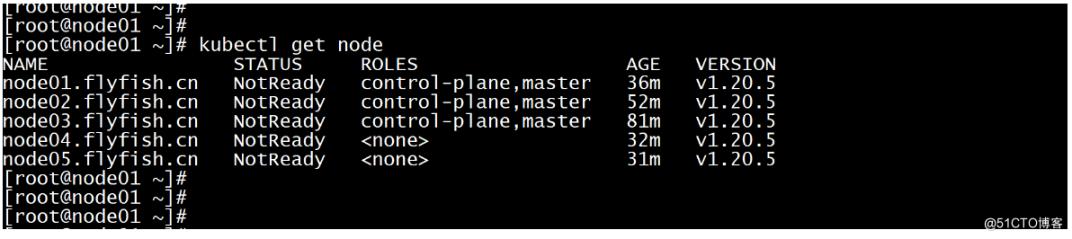

Check the node status:

[root@k8s-master01 ~]# kubectl get nodes

NAME STATUS ROLES AGE VERSION

k8s-master01 NotReady master 14m v1.12.3

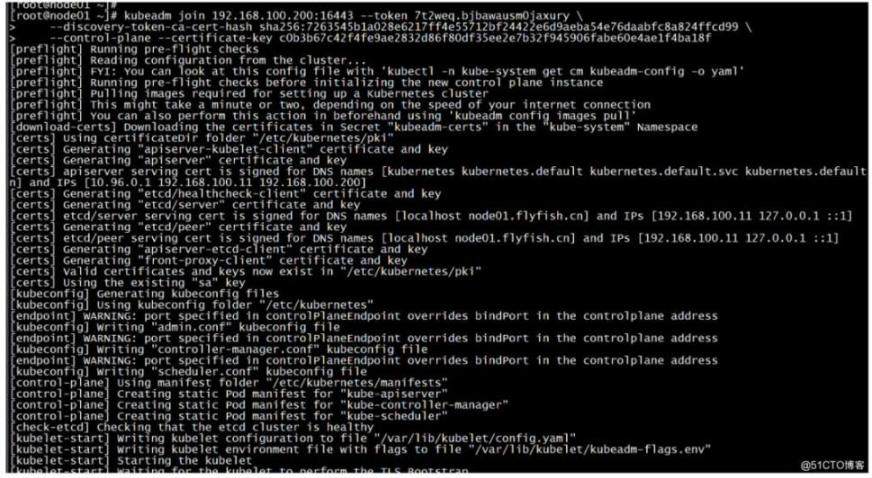

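With the kubeadm initialization method, all system components run as containers in the kube-system namespace; the Pod status can be checked at this point.

Join the other masters to the cluster: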

kubeadm join 192.168.100.200:16443 --token 7t2weq.bjbawausm0jaxury \

--discovery-token-ca-cert-hash sha256:7263545b1a028e6217ff4e55712bf24422e6d9aeba54e76daabfc8a824ffcd99 \

--control-plane --certificate-key c0b3b67c42f4fe9ae2832d86f80df35ee2e7b32f945906fabe60e4ae1f4ba18f

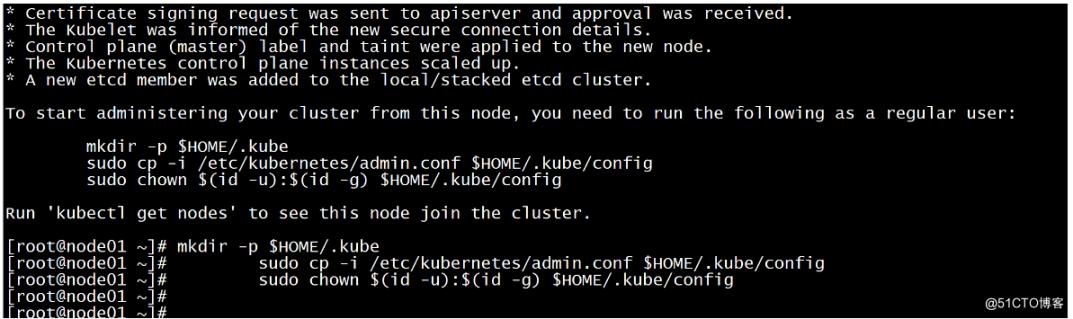

mkdir -p $HOME/.kube

sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config

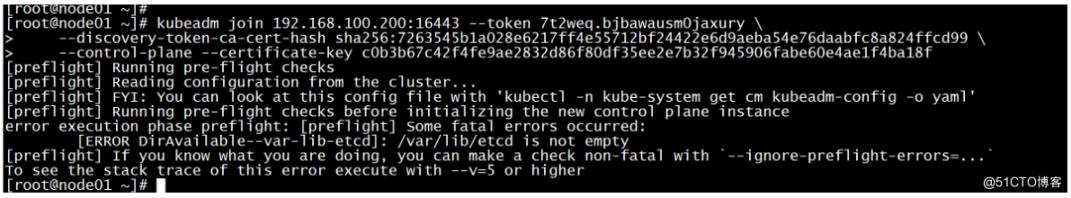

sudo chown $(id -u):$(id -g) $HOME/.kube/config

Error when joining a master node:

[ERROR DirAvailable--var-lib-etcd]: /var/lib/etcd is not empty

[preflight] If you know what you are doing, you can make a check non-fatal with `--ignore-preflight-errors=...`

To see the stack trace of this error execute with --v=5 or higher

Remove the leftover etcd data directory, then join again:

rm -rf /var/lib/etcd

kubeadm join 192.168.100.200:16443 --token 7t2weq.bjbawausm0jaxury \

--discovery-token-ca-cert-hash sha256:7263545b1a028e6217ff4e55712bf24422e6d9aeba54e76daabfc8a824ffcd99 \

--control-plane --certificate-key c0b3b67c42f4fe9ae2832d86f80df35ee2e7b32f945906fabe60e4ae1f4ba18f

mkdir -p $HOME/.kube

sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config

sudo chown $(id -u):$(id -g) $HOME/.kube/config

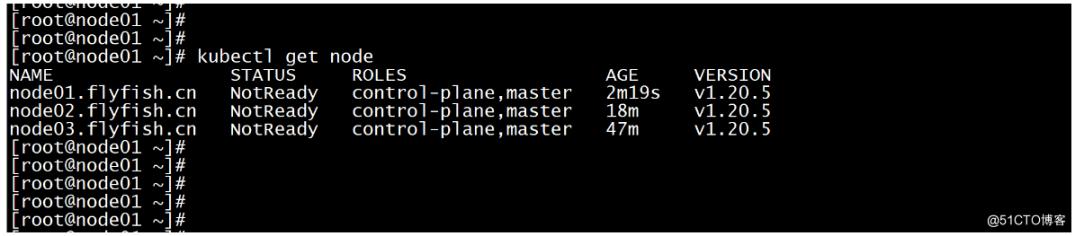

kubectl get node

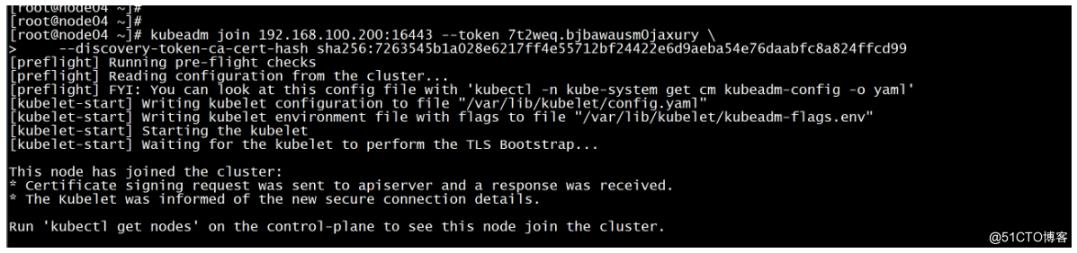

Join the worker nodes:

kubeadm join 192.168.100.200:16443 --token 7t2weq.bjbawausm0jaxury \

--discovery-token-ca-cert-hash sha256:7263545b1a028e6217ff4e55712bf24422e6d9aeba54e76daabfc8a824ffcd99

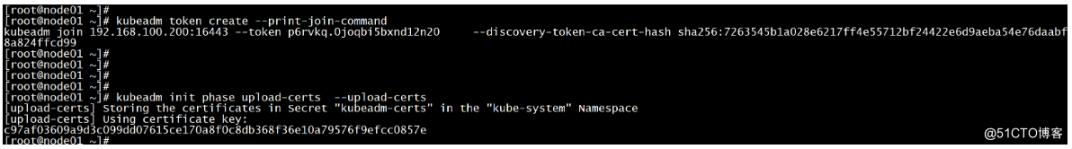

Generate a new token after the old one expires (relevant when scaling the cluster out or in):

kubeadm token create --print-join-command

-----

kubeadm join 192.168.100.200:16443 --token p6rvkq.0joqbi5bxnd12n20 --discovery-token-ca-cert-hash sha256:7263545b1a028e6217ff4e55712bf24422e6d9aeba54e76daabfc8a824ffcd99

-----

For a master, a --certificate-key must also be generated:

kubeadm init phase upload-certs --upload-certs

-----

[upload-certs] Storing the certificates in Secret "kubeadm-certs" in the "kube-system" Namespace

[upload-certs] Using certificate key:

c97af03609a9d3c099dd07615ce170a8f0c8db368f36e10a79576f9efcc0857e

----

Join the other masters to the cluster:

kubeadm join 192.168.100.200:16443 --token p6rvkq.0joqbi5bxnd12n20 --discovery-token-ca-cert-hash sha256:7263545b1a028e6217ff4e55712bf24422e6d9aeba54e76daabfc8a824ffcd99 --control-plane --certificate-key c97af03609a9d3c099dd07615ce170a8f0c8db368f36e10a79576f9efcc0857e

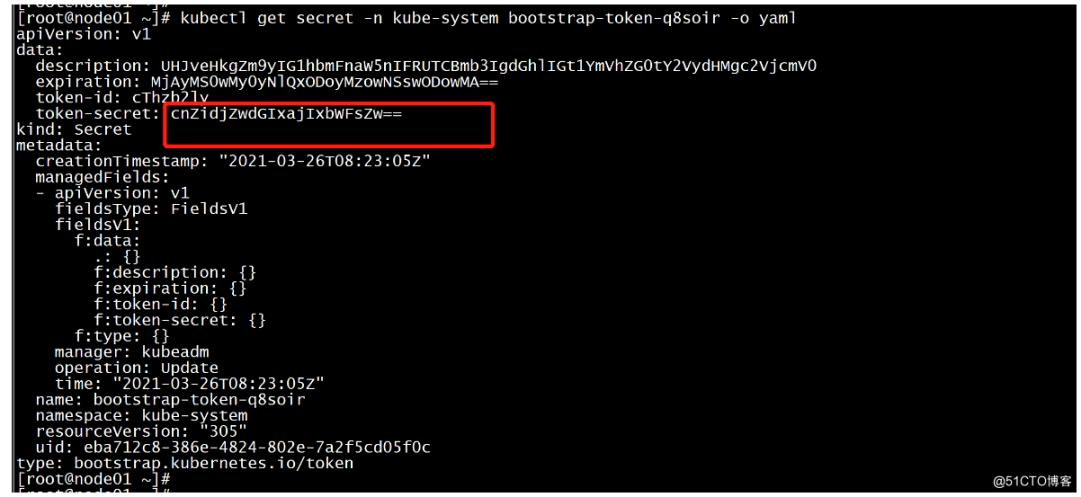

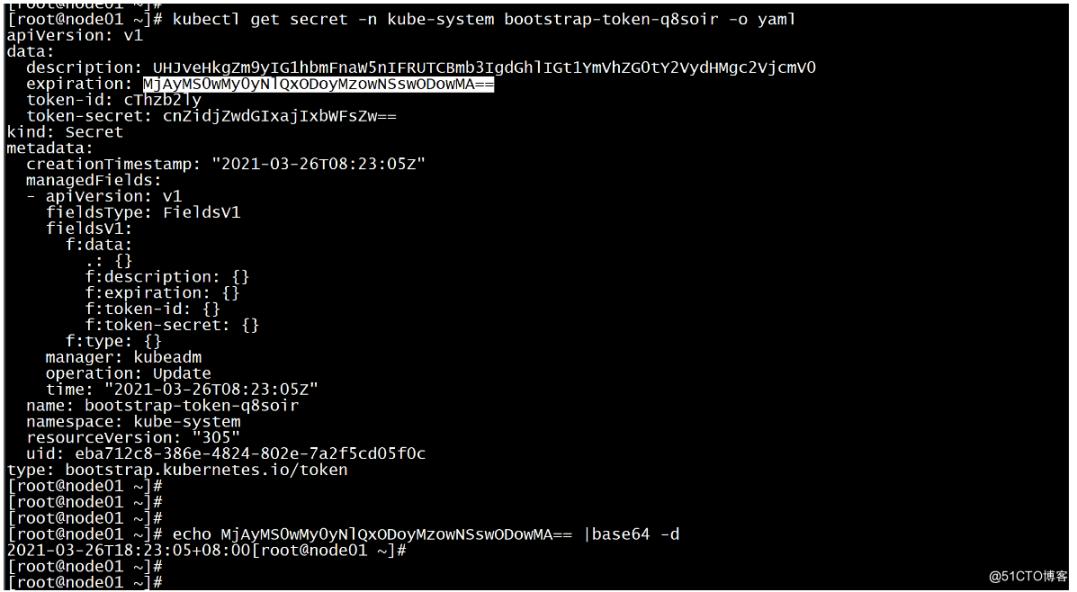

Check the token expiration time:

kubectl get secret -n kube-system -o wide

kubectl get secret -n kube-system bootstrap-token-q8soir -o yaml

-----

apiVersion: v1

data:

description: UHJveHkgZm9yIG1hbmFnaW5nIFRUTCBmb3IgdGhlIGt1YmVhZG0tY2VydHMgc2VjcmV0

expiration: MjAyMS0wMy0yNlQxODoyMzowNSswODowMA==

token-id: cThzb2ly

token-secret: cnZidjZwdGIxajIxbWFsZw==

kind: Secret

metadata:

creationTimestamp: "2021-03-26T08:23:05Z"

managedFields:

- apiVersion: v1

fieldsType: FieldsV1

fieldsV1:

f:data:

.: {}

f:description: {}

f:expiration: {}

f:token-id: {}

f:token-secret: {}

f:type: {}

manager: kubeadm

operation: Update

time: "2021-03-26T08:23:05Z"

name: bootstrap-token-q8soir

namespace: kube-system

resourceVersion: "305"

uid: eba712c8-386e-4824-802e-7a2f5cd05f0c

type: bootstrap.kubernetes.io/token

----

echo MjAyMS0wMy0yNlQxODoyMzowNSswODowMA== |base64 -d
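The decoded value is a timestamp, so it can be compared with the current time to tell whether the bootstrap token is still usable. Below is a minimal sketch, assuming GNU `date` for parsing the timestamp; the base64 string is the `expiration` field from the Secret above.

```shell
#!/bin/sh
set -eu

# Value of .data.expiration copied from the Secret shown above.
expiration_b64='MjAyMS0wMy0yNlQxODoyMzowNSswODowMA=='

# Decode the field into its plain-text timestamp.
expiration="$(printf '%s' "$expiration_b64" | base64 -d)"
echo "token expires at: $expiration"

# Convert both times to seconds since the epoch and compare them.
exp_epoch="$(date -d "$expiration" +%s)"
now_epoch="$(date +%s)"
if [ "$now_epoch" -ge "$exp_epoch" ]; then
    echo "token has expired"
else
    echo "token is still valid"
fi
```

When the token has expired, a fresh one is created with `kubeadm token create --print-join-command` as described earlier.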

kubectl get node

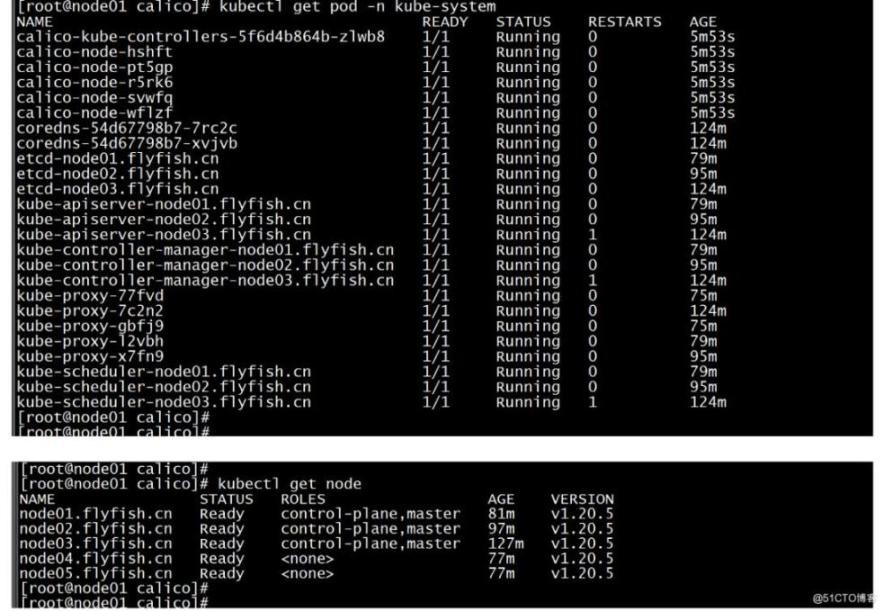

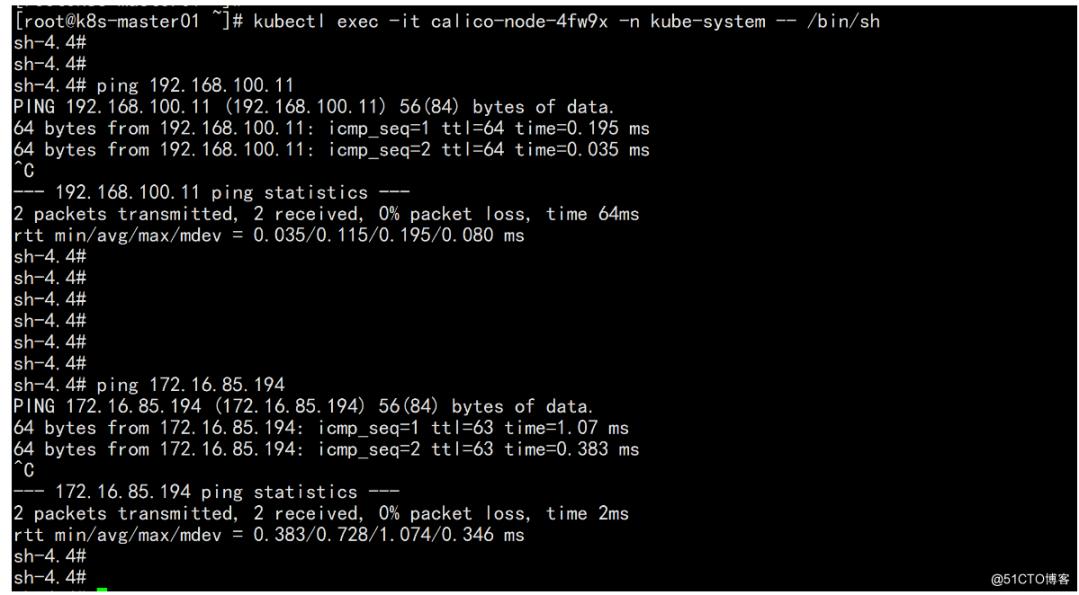

Modify the following parts of calico-etcd.yaml:

cd /root/k8s-ha-install && git checkout manual-installation-v1.20.x && cd calico/

-----

sed -i 's#etcd_endpoints: "http://<ETCD_IP>:<ETCD_PORT>"#etcd_endpoints: "https://192.168.100.11:2379,https://192.168.100.12:2379,https://192.168.100.13:2379"#g' calico-etcd.yaml

ETCD_CA=`cat /etc/kubernetes/pki/etcd/ca.crt | base64 | tr -d '\n'`

ETCD_CERT=`cat /etc/kubernetes/pki/etcd/server.crt | base64 | tr -d '\n'`

ETCD_KEY=`cat /etc/kubernetes/pki/etcd/server.key | base64 | tr -d '\n'`

sed -i "s@# etcd-key: null@etcd-key: ${ETCD_KEY}@g; s@# etcd-cert: null@etcd-cert: ${ETCD_CERT}@g; s@# etcd-ca: null@etcd-ca: ${ETCD_CA}@g" calico-etcd.yaml

sed -i 's#etcd_ca: ""#etcd_ca: "/calico-secrets/etcd-ca"#g; s#etcd_cert: ""#etcd_cert: "/calico-secrets/etcd-cert"#g; s#etcd_key: "" #etcd_key: "/calico-secrets/etcd-key" #g' calico-etcd.yaml

POD_SUBNET=`cat /etc/kubernetes/manifests/kube-controller-manager.yaml | grep cluster-cidr= | awk -F= '{print $NF}'`

sed -i 's@# - name: CALICO_IPV4POOL_CIDR@- name: CALICO_IPV4POOL_CIDR@g; s@# value: "192.168.0.0/16"@ value: '"${POD_SUBNET}"'@g' calico-etcd.yaml

-----
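The `POD_SUBNET` pipeline above depends on the `--cluster-cidr=` flag being present in the controller-manager manifest. It can be tried on a scratch file first to confirm what it extracts. This is a minimal sketch; the sample lines only imitate the style of the manifest, and the CIDR is the `podSubnet` from the kubeadm configuration.

```shell
#!/bin/sh
set -eu

# Scratch file with lines in the style of kube-controller-manager.yaml (illustrative only).
manifest_demo="$(mktemp)"
cat > "$manifest_demo" <<'EOF'
    - --cluster-cidr=172.168.100.0/16
    - --cluster-name=kubernetes
EOF

# Same pipeline as above, pointed at the scratch file: keep the text after the last '='.
POD_SUBNET="$(grep cluster-cidr= "$manifest_demo" | awk -F= '{print $NF}')"
echo "$POD_SUBNET"
```

An empty result on a real node would mean the flag is missing from the manifest, in which case the final sed would write an empty CIDR into calico-etcd.yaml.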

kubectl apply -f calico-etcd.yaml

kubectl get pod -n kube-system

kubectl get node

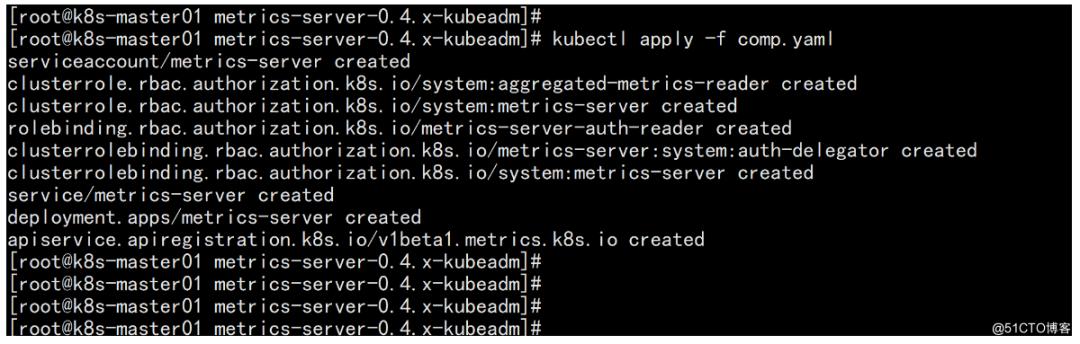

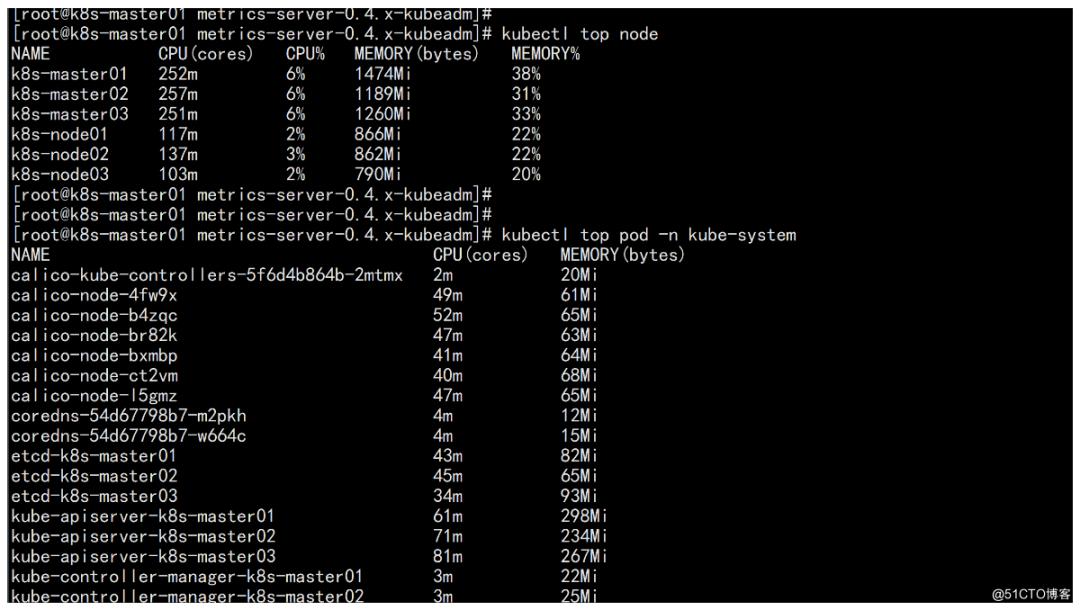

Configure the metrics server:

cd /root/k8s-ha-install/metrics-server-0.4.x-kubeadm

vim comp.yaml

----

apiVersion: v1

kind: ServiceAccount

metadata:

labels:

k8s-app: metrics-server

name: metrics-server

namespace: kube-system

---

apiVersion: rbac.authorization.k8s.io/v1

kind: ClusterRole

metadata:

labels:

k8s-app: metrics-server

rbac.authorization.k8s.io/aggregate-to-admin: "true"

rbac.authorization.k8s.io/aggregate-to-edit: "true"

rbac.authorization.k8s.io/aggregate-to-view: "true"

name: system:aggregated-metrics-reader

rules:

- apiGroups:

- metrics.k8s.io

resources:

- pods

- nodes

verbs:

- get

- list

- watch

---

apiVersion: rbac.authorization.k8s.io/v1

kind: ClusterRole

metadata:

labels:

k8s-app: metrics-server

name: system:metrics-server

rules:

- apiGroups:

- ""

resources:

- pods

- nodes

- nodes/stats

- namespaces

- configmaps

verbs:

- get

- list

- watch

---

apiVersion: rbac.authorization.k8s.io/v1

kind: RoleBinding

metadata:

labels:

k8s-app: metrics-server

name: metrics-server-auth-reader

namespace: kube-system

roleRef:

apiGroup: rbac.authorization.k8s.io

kind: Role

name: extension-apiserver-authentication-reader

subjects:

- kind: ServiceAccount

name: metrics-server

namespace: kube-system

---

apiVersion: rbac.authorization.k8s.io/v1

kind: ClusterRoleBinding

metadata:

labels:

k8s-app: metrics-server

name: metrics-server:system:auth-delegator

roleRef:

apiGroup: rbac.authorization.k8s.io

kind: ClusterRole

name: system:auth-delegator

subjects:

- kind: ServiceAccount

name: metrics-server

namespace: kube-system

---

apiVersion: rbac.authorization.k8s.io/v1

kind: ClusterRoleBinding

metadata:

labels:

k8s-app: metrics-server

name: system:metrics-server

roleRef:

apiGroup: rbac.authorization.k8s.io

kind: ClusterRole

name: system:metrics-server

subjects:

- kind: ServiceAccount

name: metrics-server

namespace: kube-system

---

apiVersion: v1

kind: Service

metadata:

labels:

k8s-app: metrics-server

name: metrics-server

namespace: kube-system

spec:

ports:

- name: https

port: 443

protocol: TCP

targetPort: https

selector:

k8s-app: metrics-server

---

apiVersion: apps/v1

kind: Deployment

metadata:

labels:

k8s-app: metrics-server

name: metrics-server

namespace: kube-system

spec:

selector:

matchLabels:

k8s-app: metrics-server

strategy:

rollingUpdate:

maxUnavailable: 0

template:

metadata:

labels:

k8s-app: metrics-server

spec:

containers:

- args:

- --cert-dir=/tmp

- --secure-port=4443

- --metric-resolution=30s

- --kubelet-insecure-tls

- --kubelet-preferred-address-types=InternalIP,ExternalIP,Hostname

# - --requestheader-client-ca-file=/etc/kubernetes/pki/front-proxy-ca.crt # change to front-proxy-ca.crt for kubeadm

- --requestheader-username-headers=X-Remote-User

- --requestheader-group-headers=X-Remote-Group

- --requestheader-extra-headers-prefix=X-Remote-Extra-

image: registry.cn-beijing.aliyuncs.com/dotbalo/metrics-server:v0.4.1

imagePullPolicy: IfNotPresent

livenessProbe:

failureThreshold: 3

httpGet:

path: /livez

port: https

scheme: HTTPS

periodSeconds: 10

name: metrics-server

ports:

- containerPort: 4443

name: https

protocol: TCP

readinessProbe:

failureThreshold: 3

httpGet:

path: /readyz

port: https

scheme: HTTPS

periodSeconds: 10

securityContext:

readOnlyRootFilesystem: true

runAsNonRoot: true

runAsUser: 1000

volumeMounts:

- mountPath: /tmp

name: tmp-dir

- name: ca-ssl

mountPath: /etc/kubernetes/pki

nodeSelector:

kubernetes.io/os: linux

priorityClassName: system-cluster-critical

serviceAccountName: metrics-server

volumes:

- emptyDir: {}

name: tmp-dir

- name: ca-ssl

hostPath:

path: /etc/kubernetes/pki

---

apiVersion: apiregistration.k8s.io/v1

kind: APIService

metadata:

labels:

k8s-app: metrics-server

name: v1beta1.metrics.k8s.io

spec:

group: metrics.k8s.io

groupPriorityMinimum: 100

insecureSkipTLSVerify: true

service:

name: metrics-server

namespace: kube-system

version: v1beta1

versionPriority: 100

----

kubectl apply -f comp.yaml

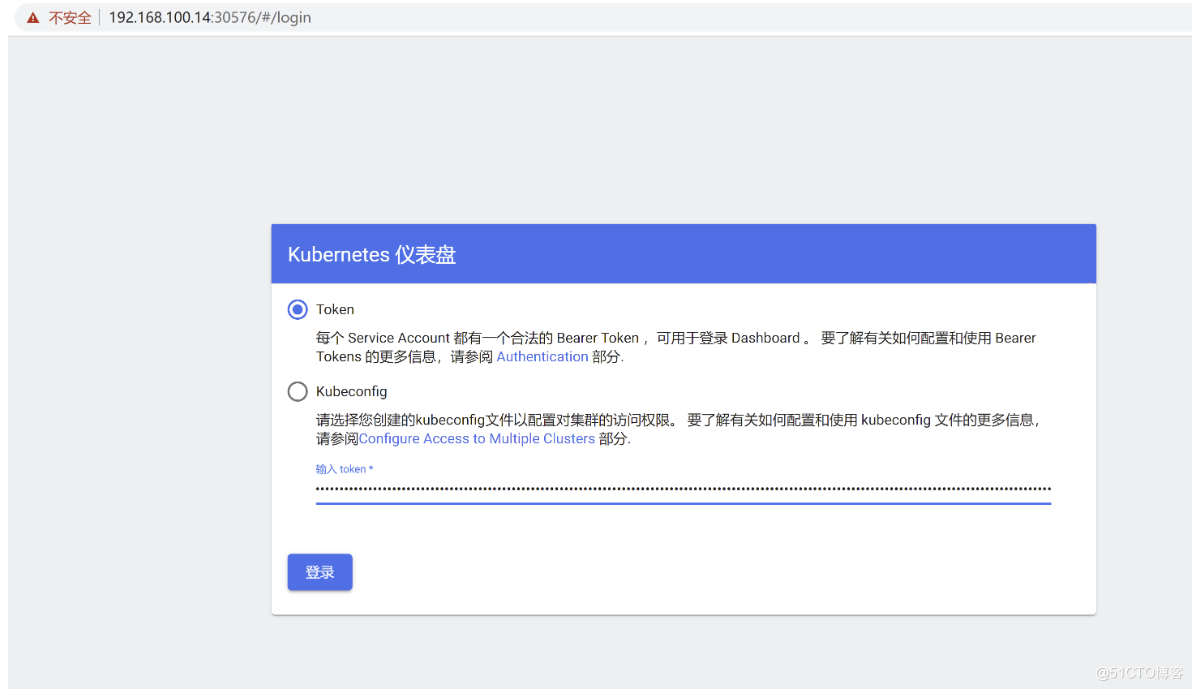

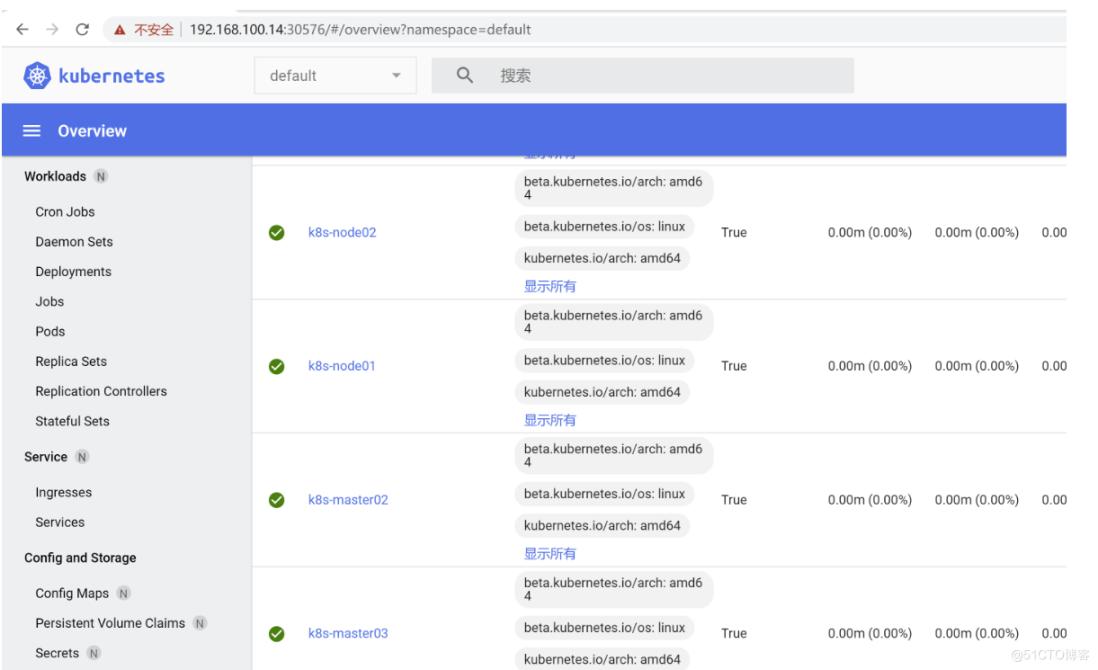

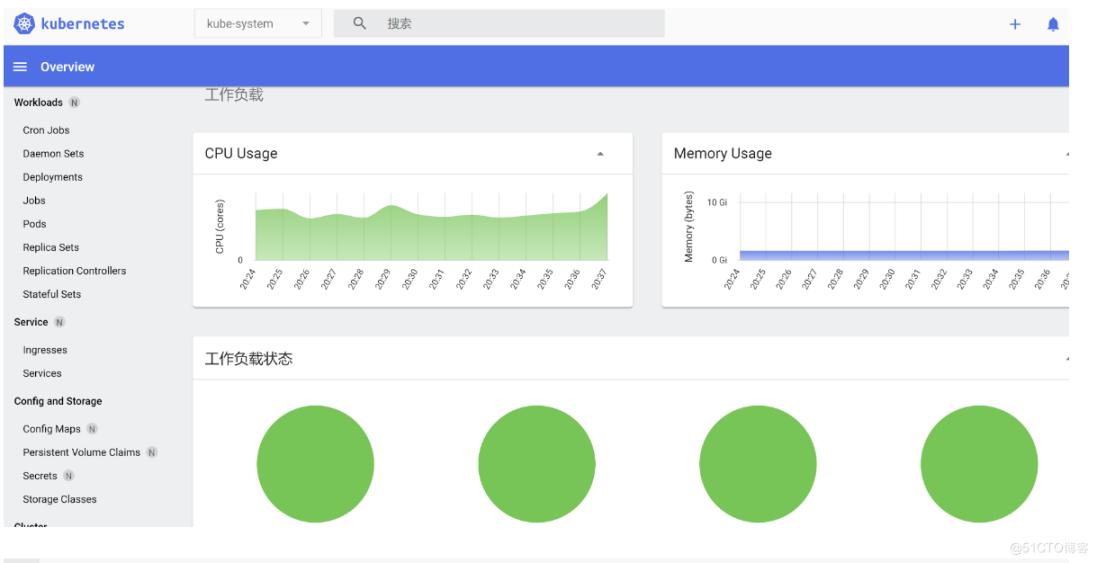

Install the dashboard:

cd /root/k8s-ha-install/dashboard

kubectl apply -f dashboard-user.yaml

kubectl apply -f dashboard.yaml

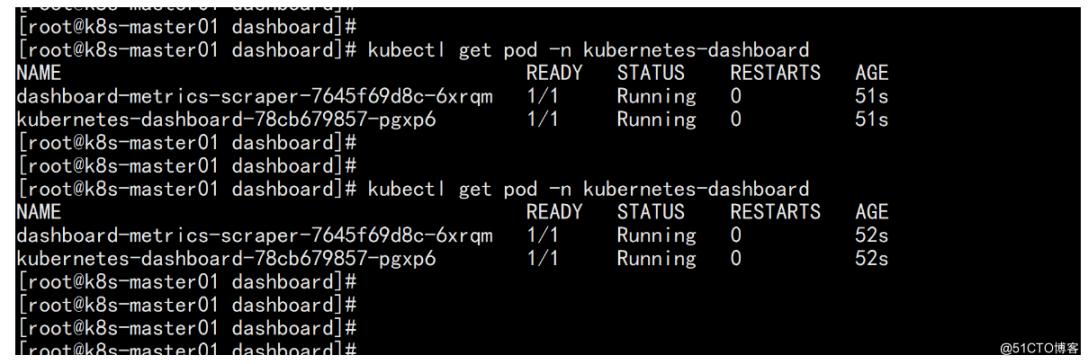

kubectl get pod -n kubernetes-dashboard

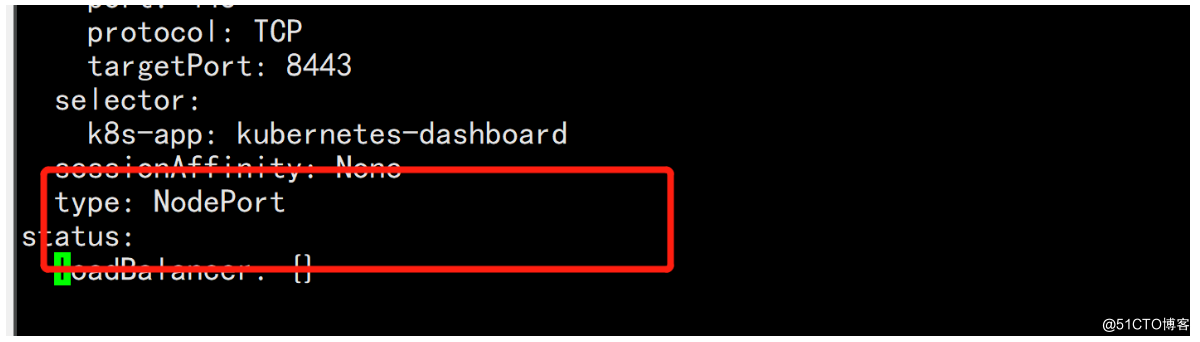

kubectl edit svc kubernetes-dashboard -n kubernetes-dashboard

Change the Service type:

type: ClusterIP

to: type: NodePort

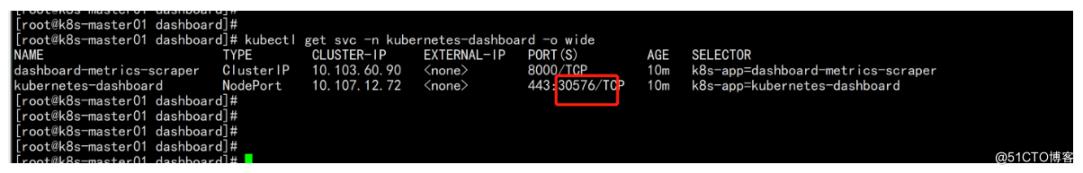

kubectl get svc -n kubernetes-dashboard -o wide

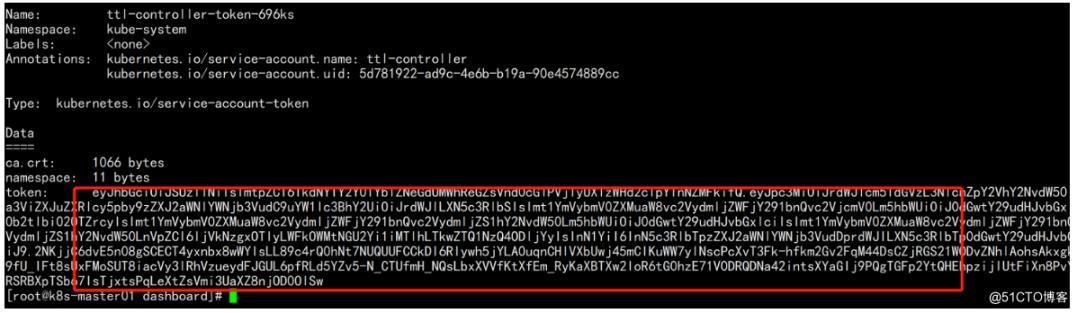

kubectl -n kube-system describe secret $(kubectl -n kube-system get secret | grep admin-user | awk '{print $1}')

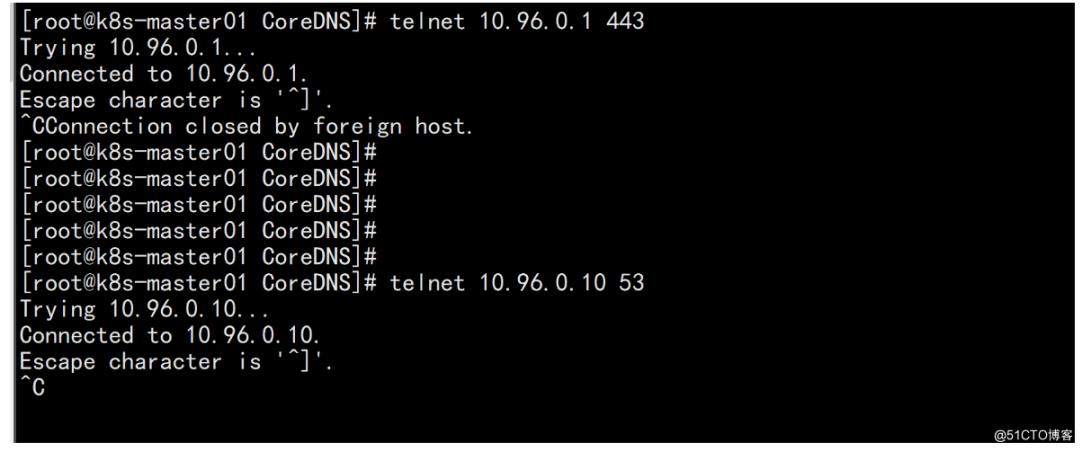

Cluster test:

kubectl get svc -n kube-system

telnet 10.96.0.1 443

telnet 10.96.0.10 53

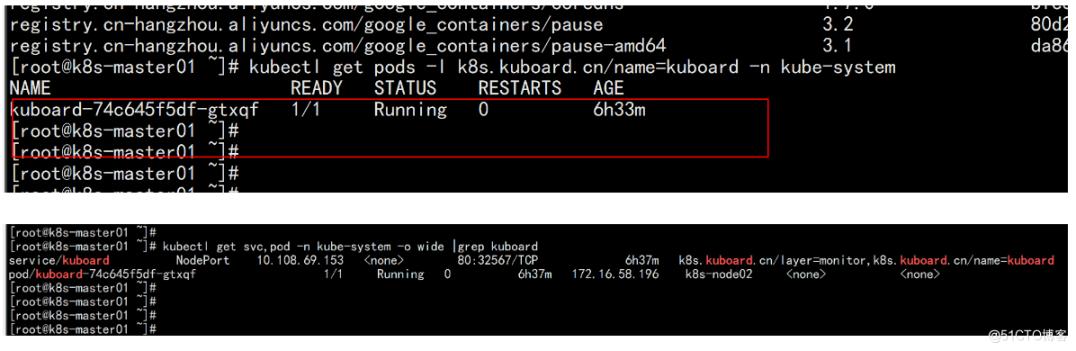

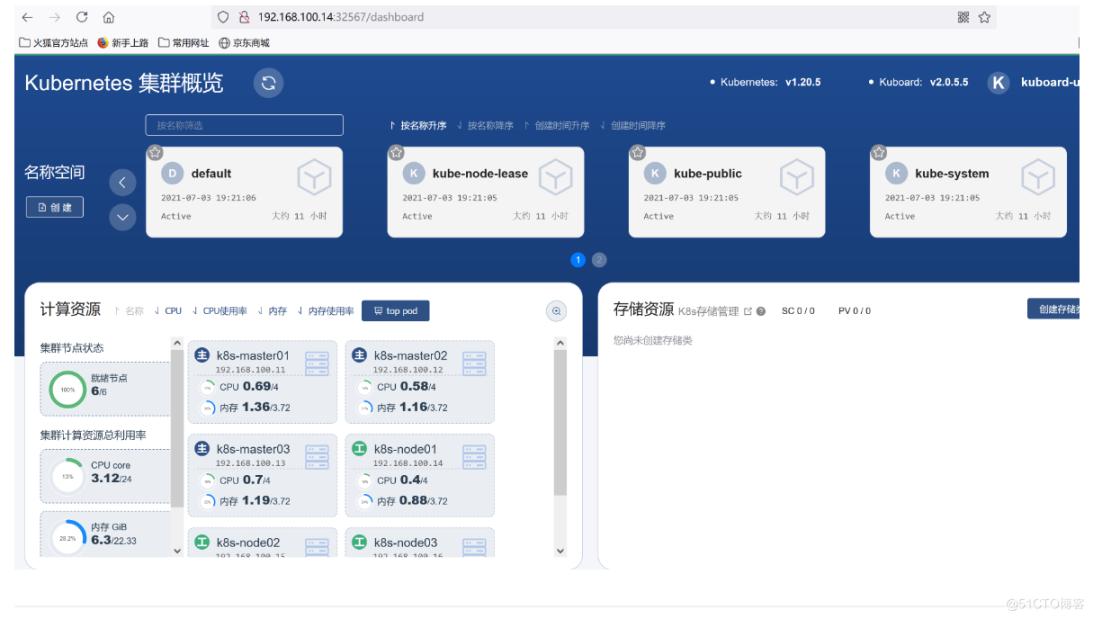

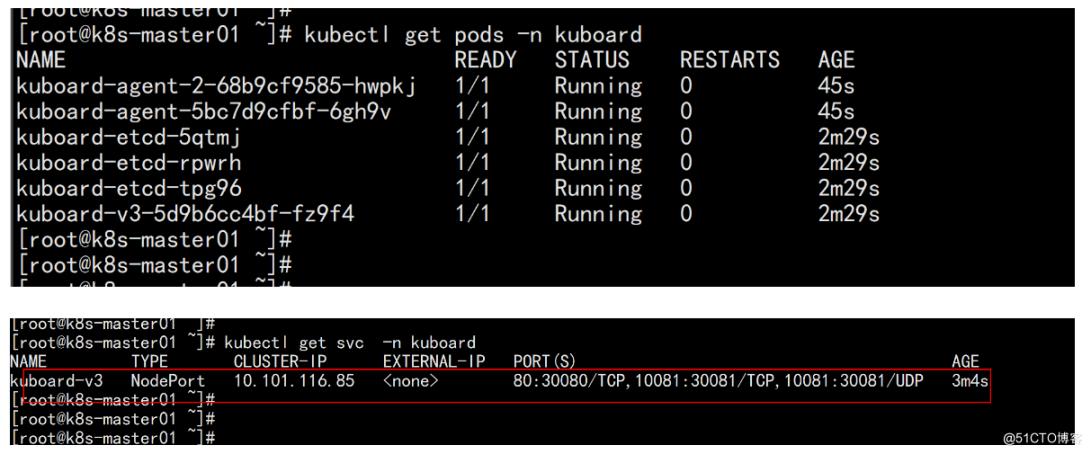

Deploy Kuboard:

Pull the image on the worker nodes:

docker pull eipwork/kuboard:latest

kubectl apply -f https://kuboard.cn/install-script/kuboard.yaml

kubectl get pods -l k8s.kuboard.cn/name=kuboard -n kube-system

kubectl get svc,pod -n kube-system -o wide |grep kuboard

Get the token:

# If Kubernetes was installed by following the documentation at www.kuboard.cn, run this command on the first master node

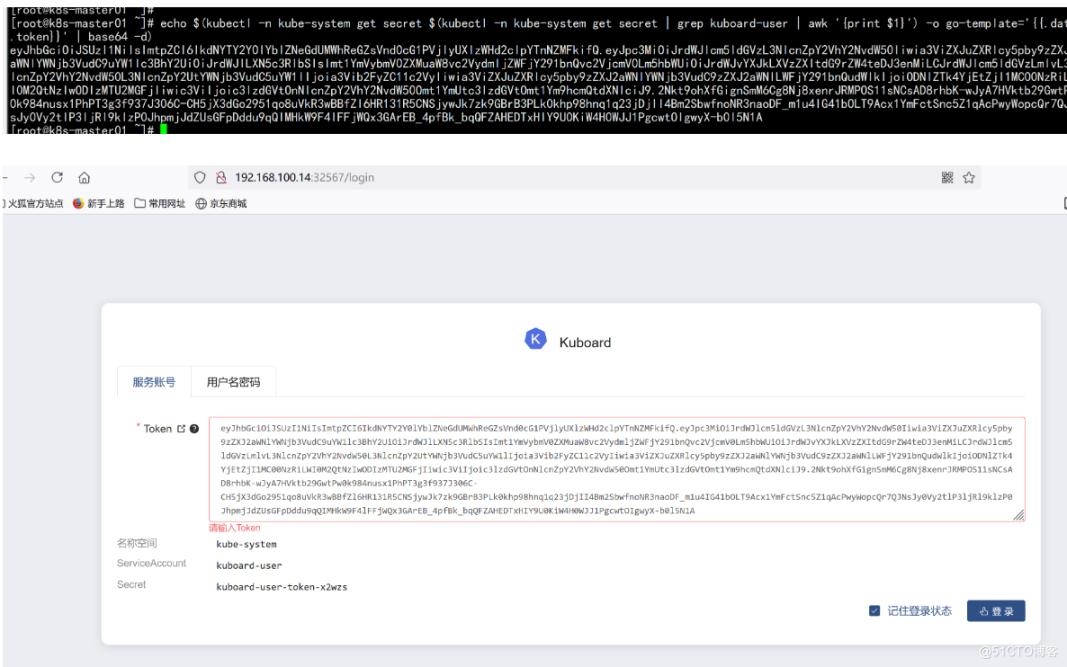

echo $(kubectl -n kube-system get secret $(kubectl -n kube-system get secret | grep kuboard-user | awk '{print $1}') -o go-template='{{.data.token}}' | base64 -d)

Uninstall Kuboard v2:

kubectl delete -f https://kuboard.cn/install-script/kuboard.yaml

Install Kuboard v3:

Pull the images on the worker nodes:

docker pull eipwork/kuboard:v3

docker pull eipwork/etcd-host:3.4.16-1

mkdir /data

chmod 777 -R /data

Set the image pull policy:

wget https://addons.kuboard.cn/kuboard/kuboard-v3.yaml

vim kuboard-v3.yaml

---

imagePullPolicy: IfNotPresent (two occurrences)

---

kubectl apply -f kuboard-v3.yaml

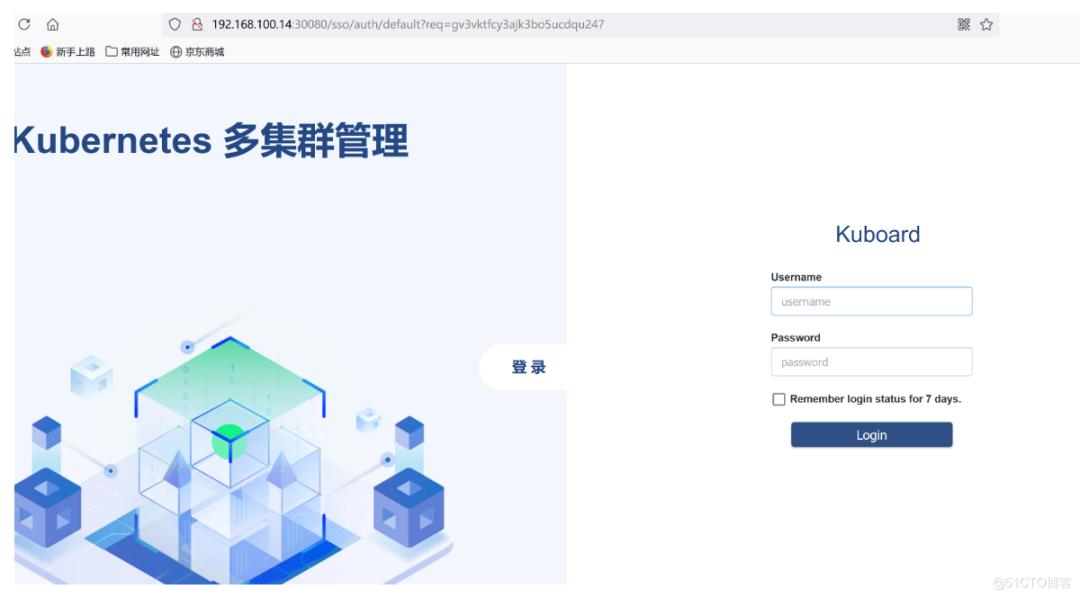

Access Kuboard

Open http://your-node-ip-address:30080 in a browser.

Enter the initial username and password and log in.

Username: admin

Password: Kuboard123